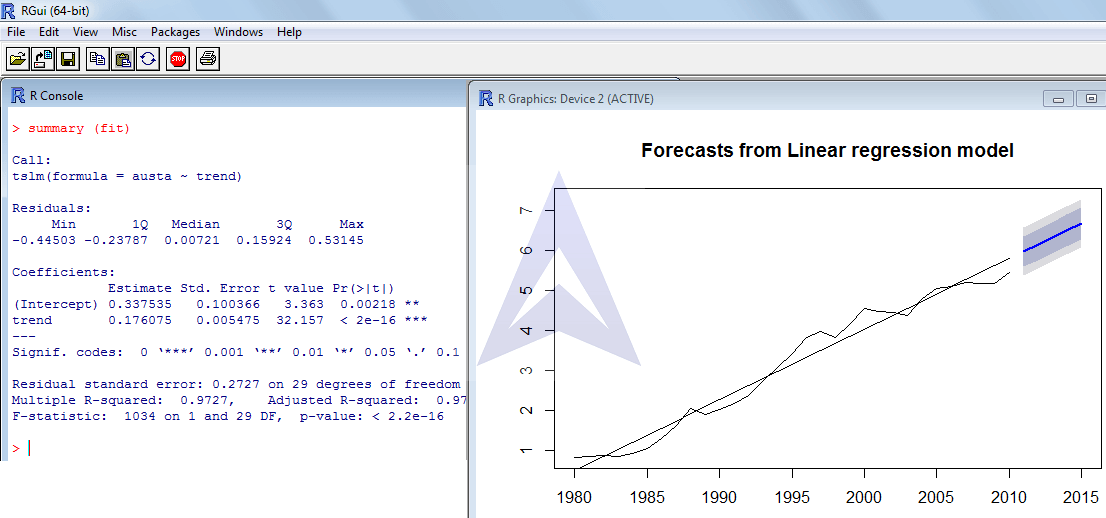

R has a built-in function, lm(), for fitting linear regression models. A regression model describes the relationship between a continuous outcome variable \(Y\) and one or more predictor variables \(X\).

Calling summary() on the fitted model prints a report that ends with these lines:

    # Residual standard error: 0.892 on 97 degrees of freedom
    # Multiple R-squared: 0.601, Adjusted R-squared: 0.593
    # F-statistic: 73 on 2 and 97 DF, p-value: <2e-16

First, focus on the “Coefficients” section. Notice that in the first column R reports estimates of our model parameters, including an estimate of \(-4.037\). We can also use the predict() function, as we did with the linear regression model above.

    # Fit a linear regression of Y on X1 and X2, then print the summary
    lm.fit = lm(Y ~ X1 + X2)
    summary(lm.fit)
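To make the fitting step concrete, here is a minimal, self-contained sketch of how the estimates in the first column of the “Coefficients” table can be pulled out and how predict() can be applied to new predictor values. The simulated data, the seed, the parameter values, and the names sim, fit, and new_obs are assumptions made for illustration; they are not the data behind the output quoted in this post.

```r
# Illustrative data only: seed, sample size, and coefficients are assumptions.
set.seed(42)
n  <- 100
X1 <- rnorm(n)
X2 <- rnorm(n)
Y  <- 1 + 2 * X1 - 1 * X2 + rnorm(n)
sim <- data.frame(Y, X1, X2)

# Fit the linear regression of Y on X1 and X2.
fit <- lm(Y ~ X1 + X2, data = sim)

# Named vector of estimates: (Intercept), X1, X2.
est <- coef(fit)

# Full coefficient table: Estimate, Std. Error, t value, Pr(>|t|).
coef_table <- coef(summary(fit))

# Predicted responses for two hypothetical new observations.
new_obs <- data.frame(X1 = c(0, 1), X2 = c(0, -1))
pred <- predict(fit, newdata = new_obs)

print(est)
print(coef_table)
print(pred)
```

With 100 observations and three estimated parameters, the fit has 97 residual degrees of freedom, the same count that appears in the summary output quoted in this post.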

Regression models are useful tools for (1) understanding the relationship between a response variable \(Y\) and a set of predictors \(X_1,\dotsc,X_p\) and (2) predicting new responses \(Y\) from the predictors \(X_1,\dotsc,X_p\). We’ll start with linear regression, which assumes that the relationship between \(Y\) and \(X_1,\dotsc,X_p\) is linear.

Let’s consider a simple example where we generate data from the following regression model:

\[Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \varepsilon.\]

To generate data from this model, we first need to set the “true values” for the model parameters \((\beta_0, \beta_1, \beta_2)\), generate the predictor variables \((X_1, X_2)\), and generate the error term \((\varepsilon)\). Once we have fixed the true values of the parameters and generated the predictor variables and the error term, the regression formula above tells us how to generate the response variable \(Y\).
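The data-generating steps described above can be sketched in R as follows. The seed, the sample size, the “true” parameter values, and the choice of standard normal predictors and errors are all assumptions made for illustration; the post does not state the values that were actually used.

```r
# Illustrative simulation: every numeric choice below is an assumption.
set.seed(1)
n <- 100

# Step 1: set the "true values" of the parameters (hypothetical).
beta0 <- 1
beta1 <- 2
beta2 <- -1

# Step 2: generate the predictor variables.
X1 <- rnorm(n)
X2 <- rnorm(n)

# Step 3: generate the error term.
eps <- rnorm(n, mean = 0, sd = 1)

# Step 4: build the response from the regression formula.
Y <- beta0 + beta1 * X1 + beta2 * X2 + eps

head(data.frame(Y, X1, X2))
```

Because the true parameters are known here, the estimates returned by lm(Y ~ X1 + X2) can be compared directly against beta0, beta1, and beta2 to see how well the fit recovers them.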
